Hello, I've built a few D/A converters around the PCM1792/1794 in the past and I am having some trouble realising the performance that these are supposed to be able to achieve. Also, as my knowledge is a little lacking when it comes to the internal workings of D/A converters, I don't know if what I am experiencing is typical of how they perform. So instead of making yet another PCB prototype I figured I'd post here in the hope that someone can help shed some new light on this.

First of all the implementation.

I have the master clock, bit clock, LR clock and the data line being transmitted over a short CAT5 network cable via an LVDS implementation using your own SN65LVDS receivers and transmitters. The received clocks then pass through an ISO7240M chip, to isolate the DAC side of things from the source of the clocks. The clocks then feed into the PCM1792. These are all mounted on the same PCB to minimise EMI.

The PCB is double sided, with the copper bottom acting as a ground plane. This is completely uninterrupted, save for a couple of areas that have been removed to reduce stray capacitance in some parts of the design. All components relevant to the PCM1792 are mounted very close to the pins of the chip and low ESR capacitors have been used throughout; the 0.1uF decouplers are ceramic. There are no traces on the copper bottom.

The copper top is obviously used for component placement, but the area directly beneath the PCM1792 is uninterrupted copper. The ground connections from the DAC chip are made directly to this copper on the top. To connect this copper area to the ground plane on the copper bottom I have something similar to a number of vias, only instead of the usual plated vias used in automated PCB fabrication, I have drilled two 1.5mm holes directly beneath the DAC chip. Each hole holds a piece of 1.5mm solid copper rod that connects the copper bottom to the copper top beneath the DAC chip.

The I/V + difference amplifier are essentially identical to the data sheet implementation, except for two things. The first is the capacitor in parallel with the feedback resistor in the I/V converters: this capacitor is in series with a small resistor to decouple the output of the opamp from the capacitance. The second is that the capacitor in the difference amplifier's filter is larger, to lower the frequency the filter operates at.

The PCM1792 is operating with a master clock of 256fs, and is controlled by a PIC24 via I2C. The system is set up so that I can alter the Delta-Sigma oversampling rate by remote control and I can also switch between the fast and slow rate digital low pass filter.

First of all, the clocks are low jitter, as can be seen from the attached image. The noise floor is quite low too, so jitter is unlikely to be a cause of any performance issues.

Now some words on the distortion performance.

With the DAC output set to around -1dB:

Going from a 48kHz sampling frequency to 96kHz results in a doubling of THD. Likewise going from 96kHz to 192kHz also results in a doubling of THD. The oversampling rate is kept the same.

If the sampling frequency is fixed at 48kHz, going from 32x oversampling to 64x also results in a doubling of THD, and going to 128x once again doubles THD over the 64x rate. The same happens at 96kHz and 192kHz too. Each time the sampling frequency or the oversampling rate doubles, the THD doubles too. (Sometimes it's not quite double, but it's close enough to see a trend emerging.)
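One way to look at this trend is to tabulate the rate at which the DAC output updates for each setting. The sketch below assumes that rate is simply the sampling frequency multiplied by the oversampling factor; that is my reading of the OS setting rather than something confirmed here, and the function names are made up for illustration.

```python
# Sketch: output update rate for each fs / oversampling combination.
# Assumption (not confirmed above): update rate = fs * oversampling factor.
SAMPLE_RATES_HZ = (48_000, 96_000, 192_000)
OS_FACTORS = (32, 64, 128)


def update_rate_hz(fs_hz, os_factor):
    """Return the assumed output update rate in Hz."""
    return fs_hz * os_factor


def rate_table():
    """Map every (fs, OS factor) pair to its assumed update rate."""
    return {
        (fs, os_factor): update_rate_hz(fs, os_factor)
        for fs in SAMPLE_RATES_HZ
        for os_factor in OS_FACTORS
    }


if __name__ == "__main__":
    for (fs, os_factor), rate in rate_table().items():
        print(f"{fs / 1000:.0f} kHz at {os_factor}x -> {rate / 1e6:.3f} MHz")
```

Under that assumption, 48kHz at 64x and 96kHz at 32x land on the same update rate, so if the THD really follows this figure those two settings should measure about the same.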

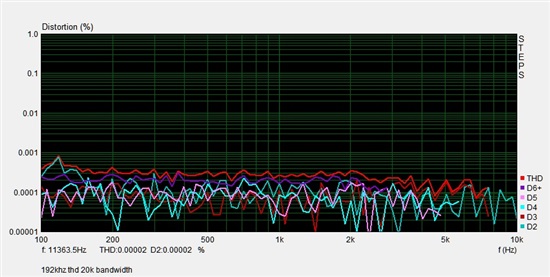

Now at -1dB and using an oversampling rate of 32x I get roughly 0.0005%, 0.001% and 0.002% THD for 48/96/192kHz. Both the left and the right channel show very similar performance. This isn't limited to low order harmonics either, as the next picture demonstrates.
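For comparison against figures quoted in dB, a THD percentage can be converted with 20*log10(percent/100). A minimal sketch using the three readings above (the function name is just for illustration):

```python
import math


def thd_percent_to_db(thd_percent):
    """Convert a THD figure in percent to dB relative to the fundamental."""
    return 20.0 * math.log10(thd_percent / 100.0)


# Readings quoted above: -1dB output, 32x oversampling.
readings_percent = {48: 0.0005, 96: 0.001, 192: 0.002}

if __name__ == "__main__":
    for fs_khz, thd in readings_percent.items():
        print(f"{fs_khz} kHz: {thd}% = {thd_percent_to_db(thd):.1f} dB")
```

This gives roughly -106dB, -100dB and -94dB, so each doubling of THD is a step of about 6dB.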

The distortion seems a tad too high to me. If that were all, I wouldn't be posting here, but things get worse, or at least more confusing, depending on various factors.

For example, the above performance is achieved when using the AD8610 opamp as the I/V converter (an OPA627 is used as the difference amplifier). If I change the I/V opamp to the THS4031 (what I was originally using) the performance degrades significantly, but it shows exactly the same trends with respect to the oversampling rate and the sampling frequency used (192kHz reaching 0.005% THD with 32x OS and going up to 0.01% with 64x).

A while ago I also built a DAC using the PCM1794. That implementation used an SSOP to DIL adaptor, so theoretically it loses out straight away due to the adaptor. However, in that design I used the THS4031 in exactly the same configuration and it performed fine. The peculiar thing is that the design using the SSOP>DIL adaptor performed far better than any of the designs I've done where the PCM1794/1792 is mounted directly to the PCB. Even stranger is that the analogue stage of each design (design 1 being with the adaptor, design 2 being without) was identical, so quite why it performed well in the adaptor version and poorly in the version without the adaptor I do not know. (By perform well I am talking 0.0003% distortion at 48kHz at -1dB output level.) So I am assuming here that the I/V stage using the THS4031 isn't, or shouldn't be, a problem.

Here's where things perhaps become even stranger.

According to the datasheet, the distortion performance reaches a minimum at around -20dB, where presumably, up until this point all the distortion was in the noise floor. Then as the output level increases, the distortion also increases, but both do so at an identical rate so the % distortion remains the same. This all makes sense, but isn't what happens with me.

If I lower the output level down from -1dB to -10dB the distortion remains roughly the same, it decreases, but only by a tiny bit. Say from 0.0018% to 0.0015% for a sampling frequency of 192khz and an OS rate of 32x.

If I then lower the output level from -10dB to -14dB, it decreases down to 0.001%.
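To see how the distortion products themselves move, the THD percentage can be combined with the output level to give an absolute level relative to full scale. A rough sketch using the three readings above, assuming the -14dB reading was also taken at 192kHz and 32x OS (the function name is only illustrative):

```python
import math


def distortion_level_dbfs(output_level_db, thd_percent):
    """Absolute level of the distortion products relative to full scale."""
    return output_level_db + 20.0 * math.log10(thd_percent / 100.0)


# (output level in dB, THD in percent) as quoted above.
readings = [(-1.0, 0.0018), (-10.0, 0.0015), (-14.0, 0.001)]

if __name__ == "__main__":
    for level_db, thd in readings:
        print(f"{level_db:+.0f} dB, {thd}% -> "
              f"{distortion_level_dbfs(level_db, thd):.1f} dBFS")
```

While the THD percentage stays roughly constant, the distortion products are falling at about the same rate as the signal itself.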

If I then lower the output from -14dB down to -18dB something interesting happens. The distortion at all sampling frequencies and pretty much all OS rates just takes a vacation and looks something like this.

Intrigued by this, I ran a sweep from 100Hz to 20kHz and came up with this.

Obviously this isn't just frequency related, so it probably isn't a result of any inductive or capacitive coupling. Nor would I imagine it is contamination of an analogue signal line by a ground current.

In trying to figure this out somewhat, I have tried altering the component values of the I/V and difference amplifiers so that the opamps see easier loads. This didn't do anything, which didn't surprise me.

What is also interesting is that when using the THS4031s in the I/V stage exactly the same thing happens. The distortion goes from being quite high (0.005% @ 192khz @32x OS) to almost invisible. It's almost as if there's some threshold point inside the DAC chip, and regardless of what I/V stage it is driving, when the driven level drops below it the distortion disappears.

I thought this might be due to the ADC of the sound card I am using to do the measurements, so I attenuated the output of the difference amplifiers by 20dB using a resistor divider. With the DAC set at -1dB output the high distortion was still there, so it's a product of the output level of the DAC and not the measurement system.
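As a quick check on the divider arithmetic, 20dB of attenuation corresponds to a 10:1 voltage ratio. The sketch below uses hypothetical 9k and 1k resistors (the actual values are not given here) and ignores loading by the sound card input:

```python
import math


def divider_gain_db(r_series_ohm, r_shunt_ohm):
    """Gain in dB of an unloaded two-resistor voltage divider."""
    ratio = r_shunt_ohm / (r_series_ohm + r_shunt_ohm)
    return 20.0 * math.log10(ratio)


if __name__ == "__main__":
    # Hypothetical values chosen only to give a 10:1 ratio.
    print(f"{divider_gain_db(9_000, 1_000):.1f} dB")
```

In practice the input impedance of the sound card sits in parallel with the shunt resistor, so the real attenuation will be slightly more than this.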

I am thinking of trying a different I/V configuration using an OPA1632 fully differential opamp as an I/V converter and then feeding that into the OPA627, but something tells me that won't solve the problem. Does this sound like an issue that anyone has dealt with before? Or does anyone who understands how DACs work internally have an idea of what could be causing this? I have tried around 7 different PCBs now, with different grounding, slightly different signal routing etc. and nothing appears to work. It seems like I am missing something fundamental.

Many thanks in advance if anyone can offer some assistance.

Matt.

It is worth mentioning again I believe, that if I use the THS4031 as an I/V converter the graphs look pretty much exactly the same, except that the rise in distortion at the end is far worse.
