Hi,

Like quite a few others on the forum, I'm trying to get MessageQ working between Linux on the ARM cores and SYS/BIOS on the DSP cores, in my case on an EVMK2H eval board, rev 3.0.

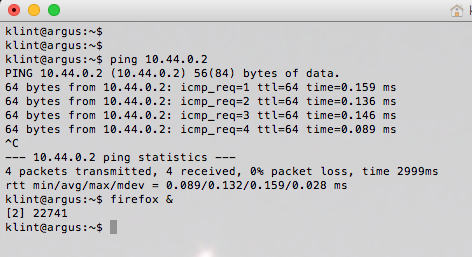

Currently I am able to build an application pair (Linux + DSP) by rebuilding IPC using "make -f ipc-bios.mak" and "make -f ipc-linux.mak" in the IPC installation directory, which gives me MessageQBench and messageq_single.xe66.

Loading and starting messageq_single.xe66 on all 8 DSP cores and running MessageQBench from the Linux command line works and reports round trip times of just over 60 microseconds. So far so good.

What I am now trying to do is rebuild (and, as a next step, modify) the DSP application in CCStudio. I created a new SYS/BIOS application using the SYS/BIOS -> TI Target Examples -> Typical template, replaced the default app.cfg with rpmsg_transport.cfg and messageq_common.cfg.xs, and replaced the default main.c with messageq_single.c (by linking). All of these files come from packages/ti/ipc/tests under the IPC installation directory. The project builds cleanly, but when I try to run the application pair with the resulting binary, the DSP application hangs (times out) waiting for the first message from the host ("Awaiting sync message from host...").

The choice of rpmsg_transport.cfg as the config file for messageq_single.c was based on this answer: https://e2e.ti.com/support/embedded/tirtos/f/355/p/343362/1204552#1204552

The LAD daemon is running, and even when the DSP application hangs, the host has been able to find the right queue on core0 (part of the log file is included below).

Since I am able to build the DSP application using make, the configuration in the CCS project seems to be the problem. I have tried, in vain, to figure out the actual configuration used when building with make -f ipc-bios.mak.
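One way to narrow down a configuration difference like this would be to compare which modules each .cfg file pulls in. The following is only a rough sketch in Python: it assumes the modules are requested with `xdc.useModule(...)` calls, and the two sample strings are made-up placeholders, not the real contents of the make build's or the CCS project's configuration.

```python
import re

# Matches xdc.useModule('pkg.Module') or xdc.useModule("pkg.Module").
USE_MODULE = re.compile(r"""xdc\.useModule\(\s*['"]([\w.]+)['"]\s*\)""")


def used_modules(cfg_text):
    """Return the set of module names requested via xdc.useModule()."""
    return set(USE_MODULE.findall(cfg_text))


def compare_cfgs(make_cfg, ccs_cfg):
    """Return (modules only in the make cfg, modules only in the CCS cfg)."""
    a, b = used_modules(make_cfg), used_modules(ccs_cfg)
    return sorted(a - b), sorted(b - a)


# Placeholder inputs for illustration only (assumption: NOT the real files).
make_cfg = """
var BIOS = xdc.useModule('ti.sysbios.BIOS');
var MessageQ = xdc.useModule('ti.sdo.ipc.MessageQ');
"""
ccs_cfg = """
var BIOS = xdc.useModule("ti.sysbios.BIOS");
"""

only_make, only_ccs = compare_cfgs(make_cfg, ccs_cfg)
print("only in make build:", only_make)
print("only in CCS build: ", only_ccs)
```

In practice the two strings would be read from the actual configuration files. Anything a .cfg brings in by other means (for example through `xdc.loadCapsule`) is not followed by this sketch and would have to be fed in separately.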

Key points of our installation:

CCS Version: 6.0.1.00040

MCSDK 3_01_03_06

IPC 3_35_01_07

Any help on getting this resolved would be most appreciated. I have spent far more time than I am prepared to admit trying to get what I regard as basic infrastructure on a SoC working, and I am quite surprised and disappointed that there isn't a well-documented and stable example to use as a starting point for application development in this setup. A Linux application on SMP-configured ARM using a "farm" of DSPs for heavy numeric processing seems like a very natural architecture on potentially very capable hardware, and I believe it deserves better support to get developers started.

Regards,

/Anders Klint

Last part of LAD log file:

[8732.922874] NameServer: waiting for unblockFd: 2, and socks: maxfd: 21

[8732.923855] LAD_NAMESERVER_GETUINT32: calling NameServer_getUInt32(0x1b4e8, 'SLAVE_CORE0')...

[8732.923885] NameServer_getLocal: entry key: 'SLAVE_CORE0' not found!

[8732.923932] NameServer_getRemote: Sending request via sock: 5

[8732.923952] NameServer_getRemote: Requesting from procId 1, MessageQ:[8732.923971] SLAVE_CORE0...

[8732.924004] NameServer_getRemote: pending on waitFd: 4

[8732.924133] NameServer: back from select()

[8732.924159] NameServer: Listener got NameServer message from sock: 6!

[8732.924186] listener_cb: recvfrom socket: fd: 6

[8732.924206] Received ns msg: byteCount: 484, from addr: 61, [8732.924224] from vproc: 0

[8732.924242] NameServer Reply: instanceName: MessageQ, name: SLAVE_CORE0[8732.924261] , value: 0x10080

[8732.924286] NameServer: waiting for unblockFd: 2, and socks: maxfd: 21

[8732.924297] NameServer_getRemote: Reply from: 1, MessageQ:[8732.924378] SLAVE_CORE0, value: 0x10080...

[8732.924397] value = 0x10080

[8732.924412] status = 0

[8732.924429] DONE

[8732.924445] Sending response...

[8732.924469] Retrieving command...
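Several of the LAD log lines above have a second timestamp embedded in the middle of the message, which makes them awkward to read or grep. As a small helper (a sketch only, assuming every timestamp has the `[seconds.microseconds]` form seen in this excerpt), the timestamps can be pulled out and the message text rejoined:

```python
import re

# Timestamps in the excerpt look like "[8732.924242]".
STAMP = re.compile(r"\[(\d+\.\d+)\]")


def split_entry(line):
    """Return (list of timestamps as floats, message with timestamps removed)."""
    stamps = [float(s) for s in STAMP.findall(line)]
    message = STAMP.sub("", line).strip()
    return stamps, message


line = ("[8732.924242] NameServer Reply: instanceName: MessageQ, "
        "name: SLAVE_CORE0[8732.924261] , value: 0x10080")

stamps, message = split_entry(line)
print(stamps)
print(message)

# Pull the hex value out of the cleaned-up message, if there is one.
match = re.search(r"value: (0x[0-9a-fA-F]+)", message)
value = int(match.group(1), 16) if match else None
print(hex(value) if value is not None else "no value field")
```

The same function can be applied line by line to the whole log file to get entries that are easier to search.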

Last part of output from MessageQBench:

dsp6 is in running state

load succeeded

run succeeded

dsp7 is in running state

Running MessageQBench:

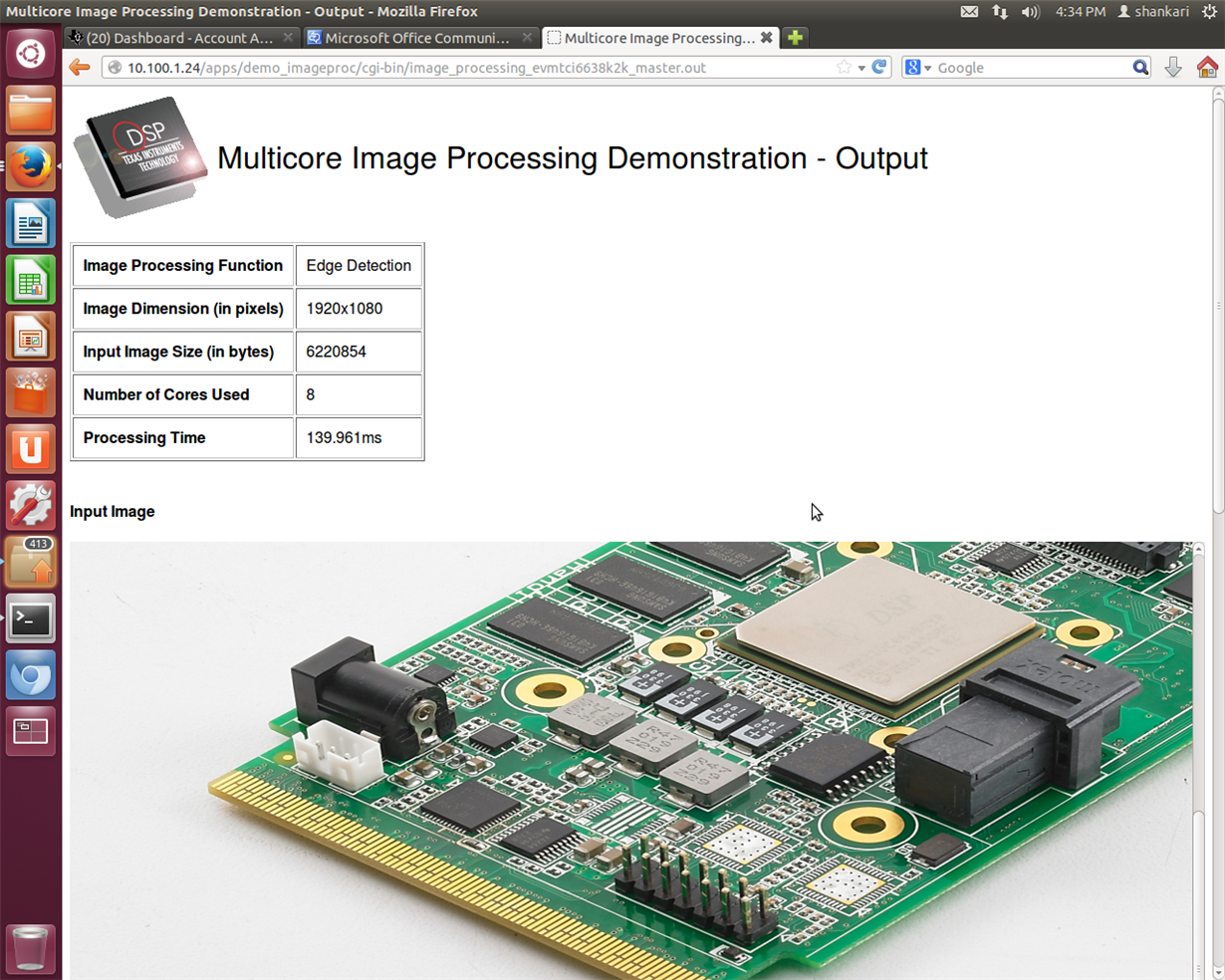

Using numLoops: 1000; payloadSize: 8, procId : 1

Entered MessageQApp_execute

Local MessageQId: 0x88

Remote queueId [0x10080]
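For reading the queue IDs in the output above: as far as I understand it, a MessageQ queue ID is a 32-bit value with the processor ID in the upper 16 bits and the queue index in the lower 16 bits. That layout is an assumption here and should be checked against the MessageQ header of the IPC version in use. A minimal sketch of the split:

```python
def split_queue_id(queue_id):
    """Split a 32-bit queue ID into (procId, queue index).

    Assumes procId is in the upper 16 bits and the index in the lower 16.
    """
    proc_id = (queue_id >> 16) & 0xFFFF
    index = queue_id & 0xFFFF
    return proc_id, index


# The two IDs reported by MessageQBench in the output above.
for label, qid in (("local", 0x88), ("remote", 0x10080)):
    proc_id, index = split_queue_id(qid)
    print(f"{label}: procId={proc_id}, queue index=0x{index:x}")
```

Under that assumption the remote ID 0x10080 belongs to procId 1, which matches the "procId : 1" line printed by MessageQBench and the "Requesting from procId 1" entry in the LAD log.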