Hello!

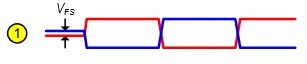

I use the same one-shot auto-direction control circuit in my device as in TIDA-00333. With this method, the RS-485 line reaches the full -1.5 V (driver output on the A and B lines) while a 0 is transmitted, but only slightly more than 200 mV (the failsafe bias level) while a 1 is transmitted, instead of the 1.5 V minimum driver output required by the RS-485 standard. Does this reduce the robustness of such an RS-485 design over long distances compared with a standard RS-485 implementation in which DE (driver enable) is controlled by a PC or MCU for the entire 8-bit transmission?
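To make the concern concrete, here is a rough sketch of the margin arithmetic behind my question. It assumes the usual ±200 mV RS-485 receiver threshold and ignores cable attenuation; the 250 mV bias value and the `noise_margin` helper are hypothetical placeholders for "a little more than 200 mV", not measured values.

```python
# Rough noise-margin comparison; all values are differential |A - B| in volts.
RECEIVER_THRESHOLD = 0.2   # RS-485 receiver input sensitivity (+/-200 mV)
DRIVER_MIN_OUTPUT = 1.5    # minimum driver differential output from the post
ASSUMED_BIAS_LEVEL = 0.25  # hypothetical stand-in for "a little more than 200 mV"


def noise_margin(differential_voltage, threshold=RECEIVER_THRESHOLD):
    """Return the headroom between a bus level and the receiver threshold."""
    return abs(differential_voltage) - threshold


driven_margin = noise_margin(DRIVER_MIN_OUTPUT)   # bit driven by the transceiver
biased_margin = noise_margin(ASSUMED_BIAS_LEVEL)  # bit held only by failsafe bias

print(f"driven margin: {driven_margin:.2f} V")
print(f"biased margin: {biased_margin:.2f} V")
```

Under these assumptions the biased level has far less headroom than the driven level, which is why I am asking about long cable runs.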

Best regards!
