Hi TI E2E Team,

Good Day.

As stated in RTLS > Time of Flight > Theory of Operation > Accuracy, the variance in the accuracy of the ToF measurements is contributed by the factors below:

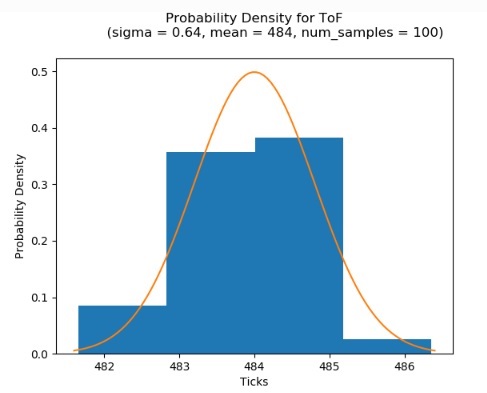

It was also stated that the resulting standard deviation of the TICK offset (due to the above factors) was observed by TI to be 0.64. This is also shown in the ToF Probability Density chart below:

I have some inquiries about this as follows:

1.) Statistically, what exactly does “sigma = 0.64” mean?

a.) Is this “sigma = 0.64” taken from a single device only?

b.) Is this “sigma = 0.64” taken from devices from a single production lot (or batch) only?

c.) Is this “sigma = 0.64” taken from devices from multiple production lots?

2.) Is this “sigma = 0.64” a fixed value over the production lifetime of the TI device?

3.) Is it possible to calibrate the TI device so that all devices achieve “sigma = 0.64” in a mass-production scenario?

4.) Has TI tried to mathematically (not statistically) calculate the min-max range of the factors below:

Hoping for your enlightenment.

Best Regards,