Hello,

I am using an LM5118 circuit with the following characteristics and part values:

Vout = ~21.4V

Fsw = 491.5kHz (i.e., 10kohm timing resistor)

Rsense = 8 mohms

Cramp = 470pF (chosen at the next standard value below the calculated recommended value due to the high output voltage and need for additional slope compensation as discussed in the datasheet)

VCCX = ~10.5V

The compensation network is such that the calculated Phase Margin in both buck and buck-boost is solid.

A few devices have recently exhibited the behavior described below. I'm trying to determine whether it's just a bad batch of ICs (on one of them, the IC was replaced and the behavior went away), or whether there is a design issue I need to address that is making things marginal.

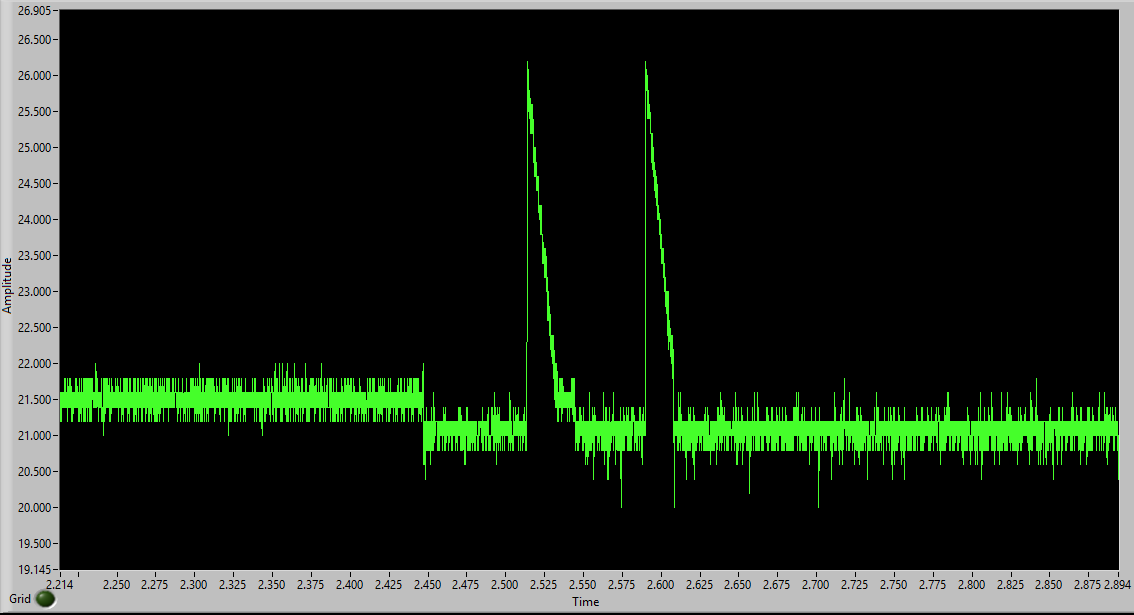

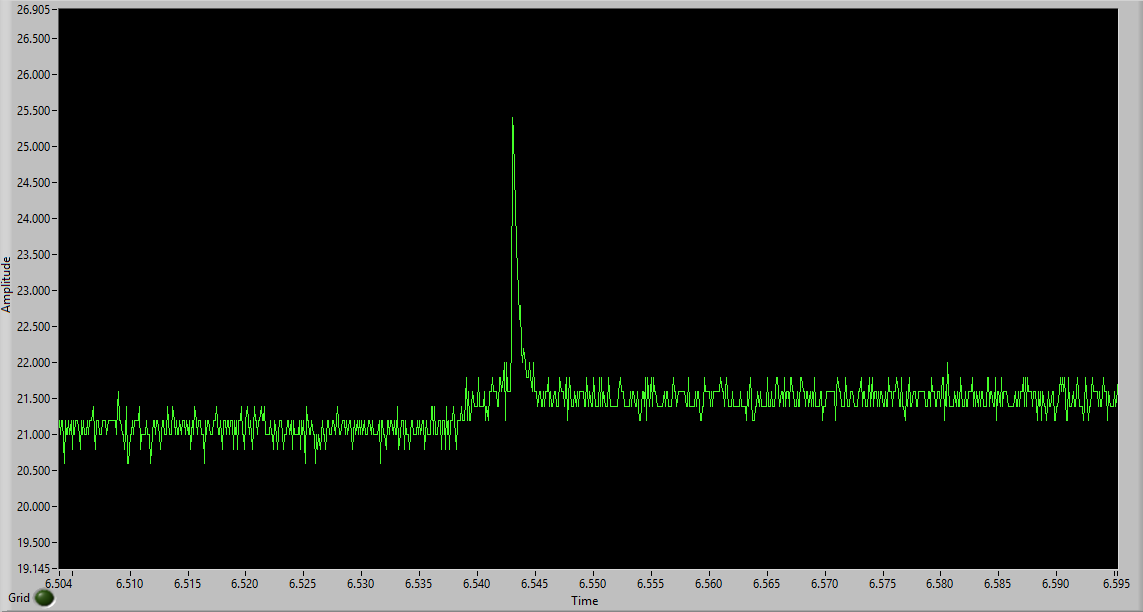

When switching from a light load (less than 100mA) to a load of about 7 ohms, the output voltage dips a few percent and stays there until the load starts to decay (i.e., slowly turns off over a few ms). At that point, the output drifts back up before spiking by several volts. It can also spike by several volts immediately when the load is removed quickly.

This behavior has occurred at input voltages ranging from about 27.4V to 28.2V. Granted, at the highest end of that range, the voltage doesn't dip the few percent, but it still has an excessive spike when removing a load, compared to normal behavior.
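For reference, here is a quick sketch of the arithmetic behind those operating points, using only the values quoted above. The duty-cycle figure assumes an ideal, lossless buck stage (D = Vout/Vin), so the real duty cycle will be somewhat higher once diode, FET, and inductor drops are included. The function and variable names are just for illustration.

```python
# Rough operating-point numbers from the values quoted in this post.
V_OUT = 21.4               # V, approximate regulated output
R_LOAD = 7.0               # ohms, approximate heavy load
V_IN_RANGE = (27.4, 28.2)  # V, input range where the behavior was seen


def load_current(v_out, r_load):
    """Steady-state load current for a resistive load."""
    return v_out / r_load


def ideal_buck_duty(v_out, v_in):
    """Ideal buck duty cycle D = Vout/Vin (assumption: no conduction losses)."""
    return v_out / v_in


i_load = load_current(V_OUT, R_LOAD)
duties = {v_in: ideal_buck_duty(V_OUT, v_in) for v_in in V_IN_RANGE}

print(f"Load current: {i_load:.2f} A")
for v_in, duty in duties.items():
    print(f"Vin = {v_in:.1f} V -> ideal buck duty = {duty:.1%}")
```

So the heavy load is roughly 3 A, and the ideal duty cycle sits in the high 70s of percent across this input range, which may be worth comparing against the buck to buck-boost transition region given in the datasheet.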

The screenshots above show the output voltage. The first is when the load is applied, with some intentional "bounce" of the load being removed and reapplied to show the excessive spike. The second is when the load is decaying, showing the drift back up and the spike.

Is there a good explanation for this type of behavior? Or did something break inside the ICs?

Thanks,

John