Part Number: MSPM0G1507

Hi, I'm trying to determine the minimum sampling time for the best high-resolution acquisition of an A/D source, and I ran into trouble with some information in the TI documents.

The first question is: what is the settling error time parameter? The only detail I can find about it is the settling error time shown for the temperature sensor. What about other, generic A/D inputs?

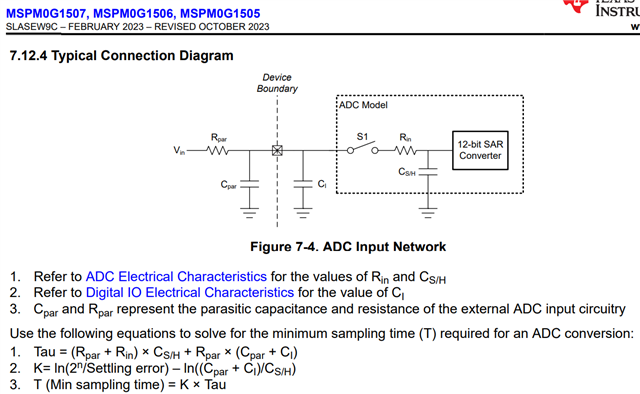

The second question is that the hardware design guide takes a different approach, as follows:

The approach is not the same: the settling error is changed and used as a fixed value (which, by the way, does not match the temperature sensor settling error time of 10 us).
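To make the comparison concrete, here is a minimal sketch of the generic single-pole RC settling model that I assume both equations are derived from. It is not taken from either TI document: the function name, the parameter names, and the example values are all hypothetical placeholders, and the real source resistance, ADC input resistance, and sample-and-hold capacitance have to come from the datasheet. The idea is that the sampling capacitor charges as 1 - exp(-t/tau), so the residual error after time t is exp(-t/tau) of the input step, and that error has to be below the chosen fraction of an LSB.

```python
import math


def min_sample_time(r_source_ohm, r_in_ohm, c_sh_farad, n_bits, settle_lsb=0.5):
    """Minimum sampling time for a single-pole RC input model (assumed model).

    Requires exp(-t / tau) <= settle_lsb / 2**n_bits, which gives
    t >= tau * ln(2**n_bits / settle_lsb), with tau = (R_source + R_in) * C_sh.
    """
    if settle_lsb <= 0:
        raise ValueError("settle_lsb must be positive")
    tau = (r_source_ohm + r_in_ohm) * c_sh_farad
    return tau * math.log(2 ** n_bits / settle_lsb)


if __name__ == "__main__":
    # Placeholder values for illustration only, NOT MSPM0G1507 datasheet values.
    t_half = min_sample_time(1_000.0, 500.0, 3.3e-12, n_bits=12, settle_lsb=0.5)
    t_quarter = min_sample_time(1_000.0, 500.0, 3.3e-12, n_bits=12, settle_lsb=0.25)
    print(f"settle to 1/2 LSB: {t_half * 1e9:.1f} ns")
    print(f"settle to 1/4 LSB: {t_quarter * 1e9:.1f} ns")
```

In this model, a tighter settling error target only changes the logarithm term, so using a fixed settling error versus a resolution-dependent one would give different minimum sampling times for the same RC values.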

Could anyone help me understand which of these equations is the better approach, and why they differ?