Part Number: AM62A1-Q1

Hi Champs,

A customer has experimented with edgeai-modelmaker (https://github.com/TexasInstruments/edgeai-tensorlab/tree/main/edgeai-modelmaker) and ran into the following problem:

Quote"

I wanted to use training data from https://universe.roboflow.com/search?q=traffic%2520light, and training works because the annotations are in an acceptable COCO format.

Unfortunately, after placing the model in the model_zoo directory on the AM62A and running it on the test video, I got no results. The model does not detect anything.

Therefore, I performed another test:

- I trained the model with sample data from TI, using input_data_path: 'http://software-dl.ti.com/jacinto7/esd/modelzoo/08_06_00_01/datasets/tiscapes2017_driving.zip' and the config_detection.yaml configuration file.

- I moved the trained model to the model_zoo directory as 20250428-145500_yolox_nano_lite_onnxrt_AM62A.

- I ran the test on the test video and recorded the result as output. My configuration is attached as test1.yaml.

- The model does not detect anything. See tiscapes2017_driving.mp4.

- I attach the logs from training the model: logi.txt and run.log.

My question: have I forgotten something, and what could the problem be?

BTW

As far as I can see, the model is exported in the universal ONNX format. Is it possible to simulate this model outside the target system, i.e. without the AM62A?

https://dev.ti.com/modelcomposer is still only available in the older version 9.1.

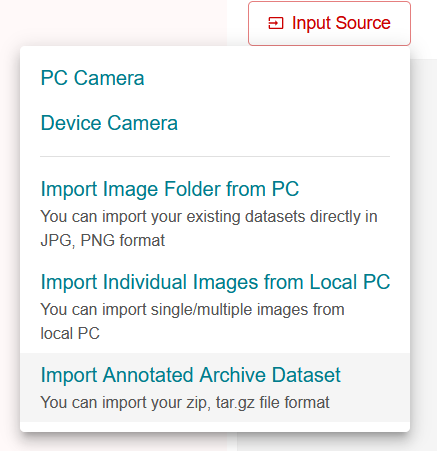

Is there a converter from the COCO format to a format that is supported by https://dev.ti.com/edgeaistudio/?

"
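Since the customer describes the Roboflow export as "acceptable COCO format", one thing that can be checked independently of the tool is whether the annotation file is internally consistent. Below is a minimal sketch using only the Python standard library. It assumes the standard COCO detection layout (top-level `images`, `annotations`, `categories`, with `bbox` as `[x, y, width, height]` in pixels); the function names `check_coco` and `class_histogram` are hypothetical helpers, not part of edgeai-modelmaker.

```python
import json
from collections import Counter


def check_coco(coco: dict) -> list:
    """Return a list of human-readable problems found in a COCO detection dict."""
    problems = []
    for key in ("images", "annotations", "categories"):
        if not isinstance(coco.get(key), list):
            problems.append(f"missing or non-list top-level key: {key}")
    if problems:
        return problems

    # Image sizes by id, used for the bounds check below.
    sizes = {img["id"]: (img.get("width"), img.get("height")) for img in coco["images"]}
    category_ids = {cat["id"] for cat in coco["categories"]}

    for ann in coco["annotations"]:
        ann_id = ann.get("id")
        if ann.get("image_id") not in sizes:
            problems.append(f"annotation {ann_id}: unknown image_id")
            continue
        if ann.get("category_id") not in category_ids:
            problems.append(f"annotation {ann_id}: unknown category_id")
        bbox = ann.get("bbox")
        if not (isinstance(bbox, list) and len(bbox) == 4):
            problems.append(f"annotation {ann_id}: bbox is not [x, y, width, height]")
            continue
        x, y, w, h = bbox
        if w <= 0 or h <= 0:
            problems.append(f"annotation {ann_id}: non-positive bbox size")
        width, height = sizes[ann["image_id"]]
        if width and height and (x < 0 or y < 0 or x + w > width or y + h > height):
            problems.append(f"annotation {ann_id}: bbox outside image bounds")
    return problems


def class_histogram(coco: dict) -> Counter:
    """Count annotations per category name ('?' for unknown category ids)."""
    names = {cat["id"]: cat["name"] for cat in coco["categories"]}
    return Counter(names.get(ann["category_id"], "?") for ann in coco["annotations"])


if __name__ == "__main__":
    import sys

    # Usage: python check_coco.py path/to/annotations.json
    with open(sys.argv[1], encoding="utf-8") as f:
        data = json.load(f)
    for line in check_coco(data) or ["no structural problems found"]:
        print(line)
    print(dict(class_histogram(data)))
```

A clean report only shows that the file is structurally sound; it does not explain missing detections on the target by itself.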

(py310) ciupakt@RZE-CIUPAKTLX-N:~/Projects/edgeai-tensorlab/edgeai-modelmaker$ ./run_modelmaker.sh AM62A config_detection.yaml

Number of AVX cores detected in PC: 12

AVX compilation speedup in PC : 1

Target device : AM62A

PYTHONPATH : .:

TIDL_TOOLS_PATH : ../edgeai-benchmark/tools/tidl_tools_package/AM62A/tidl_tools

LD_LIBRARY_PATH : ../edgeai-benchmark/tools/tidl_tools_package/AM62A/tidl_tools:

argv: ['./scripts/run_modelmaker.py', 'config_detection.yaml', '--target_device', 'AM62A']

---------------------------------------------------------------------

INFO: ModelMaker - task_type:detection model_name:yolox_nano_lite dataset_name:tiscapes2017_driving run_name:20250428-145500/yolox_nano_lite

- Model: yolox_nano_lite

- TargetDevices & Estimated Inference Times (ms): {'TDA4VM': 3.74, 'AM62A': 8.87, 'AM67A': '8.87 (with 1/2 device capability)', 'AM68A': 3.73, 'AM69A': '3.64 (with 1/4th device capability)', 'AM62': 516.15}

- This model can be compiled for the above device(s).

---------------------------------------------------------------------

INFO: ModelMaker - dataset split sizes {'train': 393, 'val': 107}

INFO: ModelMaker - max_num_files is set to: 10000

INFO: ModelMaker - dataset split sizes are limited to: {'train': 393, 'val': 107}

INFO: ModelMaker - dataset loading OK

loading annotations into memory...

Done (t=0.06s)

creating index...

index created!

loading annotations into memory...

Done (t=0.01s)

creating index...

index created!

INFO: ModelMaker - run params is at: /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/run.yaml

INFO: ModelMaker - running training - for detailed info see the log file: /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/training/run.log

TASKS TOTAL=1, NUM_RUNNING=0: 100%|██████████████████████████| 1/1 [03:54<00:00, 234.09s/it, postfix={'RUNNING': [], 'COMPLETED': ['yolox_nano_lite']}]

Trained model is at: /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/training

SUCCESS: ModelMaker - Training completed.

INFO: ModelMaker - running compilation - for detailed info see the log file: /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/compilation/AM62A/work/od-8200/run.log

INFO:20250428-145856: number of configs - 1

TASKS TOTAL=1, NUM_RUNNING=0: 100%|█████████████████████████████████████| 1/1 [03:56<00:00, 2.01s/it, postfix={'RUNNING': [], 'COMPLETED': ['od-8200']}]

SUCCESS: Benchmark - completed: 1/1

TASKS TOTAL=1, NUM_RUNNING=0: 100%|████████████████████████████████████| 1/1 [03:57<00:00, 237.05s/it, postfix={'RUNNING': [], 'COMPLETED': ['od-8200']}]

INFO: packaging artifacts to /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/compilation/AM62A/pkg please wait...

SUCCESS:20250428-150254: finished packaging - /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/compilation/AM62A/work/od-8200

Compiled model is at: /home/ciupakt/Projects/edgeai-tensorlab/edgeai-modelmaker/data/projects/tiscapes2017_driving/run/20250428-145500/yolox_nano_lite/compilation/AM62A/pkg/20250428-145500_yolox_nano_lite_onnxrt_AM62A.tar.gz

SUCCESS: ModelMaker - Compilation completed.
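Before copying the compiled package reported above into model_zoo, it may be worth listing what the .tar.gz actually contains. The sketch below does that with the standard `tarfile` module. The expected entry names (`param.yaml`, `model`, `artifacts`) are an assumption about the usual layout of a compiled model package and should be compared against a known-good model_zoo entry on the board; `top_level_entries` and `missing_entries` are hypothetical helper names.

```python
import tarfile

# ASSUMPTION: entries a compiled model package is expected to carry at its top level.
# Verify this list against a model that is known to work on the target.
EXPECTED_ENTRIES = ("param.yaml", "model", "artifacts")


def top_level_entries(archive_path: str) -> set:
    """Return the set of top-level file and directory names inside a tar archive."""
    tops = set()
    with tarfile.open(archive_path, "r:*") as tar:
        for name in tar.getnames():
            # Ignore leading "./" and empty path components.
            parts = [p for p in name.split("/") if p not in ("", ".")]
            if parts:
                tops.add(parts[0])
    return tops


def missing_entries(archive_path: str, expected=EXPECTED_ENTRIES) -> list:
    """Return the expected entries that are absent from the archive."""
    found = top_level_entries(archive_path)
    return [entry for entry in expected if entry not in found]


if __name__ == "__main__":
    import sys

    # Usage: python check_pkg.py 20250428-145500_yolox_nano_lite_onnxrt_AM62A.tar.gz
    path = sys.argv[1]
    print("top-level entries:", sorted(top_level_entries(path)))
    print("missing (per the assumed layout):", missing_entries(path))
```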

2185.run.log

https://e2e.ti.com/cfs-file/__key/communityserver-discussions-components-files/791/test1.yaml
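On the customer's side question about simulating the ONNX model outside the AM62A: a plain ONNX file can usually be loaded on a PC with ONNX Runtime's CPU provider, which at least shows whether the float model loads and what its outputs look like. The sketch below is only a smoke test under stated assumptions: it requires `numpy` and `onnxruntime` to be installed, it assumes a float32 input, and it feeds an all-zero tensor, so it says nothing about accuracy or about the TIDL-compiled artifacts. `concrete_shape` and `run_once` are hypothetical helper names.

```python
def concrete_shape(shape, default=1):
    """Replace symbolic or unknown dimensions (None, 'batch', ...) with `default`."""
    return [d if isinstance(d, int) and d > 0 else default for d in shape]


def run_once(model_path: str):
    """Load an ONNX model on the CPU and run it once on a zero-filled input."""
    # Third-party imports stay local so concrete_shape has no dependencies.
    import numpy as np
    import onnxruntime as ort

    session = ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])
    inp = session.get_inputs()[0]
    print("input:", inp.name, inp.shape, inp.type)

    # ASSUMPTION: the model takes a single float32 tensor. A real check needs an
    # actual image with the same preprocessing that was used during training.
    dummy = np.zeros(concrete_shape(inp.shape), dtype=np.float32)
    outputs = session.run(None, {inp.name: dummy})

    for meta, value in zip(session.get_outputs(), outputs):
        print("output:", meta.name, value.shape, value.dtype)
    return outputs


if __name__ == "__main__":
    import sys

    # Usage: python run_onnx.py path/to/model.onnx
    run_once(sys.argv[1])
```

If the float model produces sensible boxes on a real, correctly preprocessed frame on the PC but nothing on the board, that would point toward the compilation or deployment step rather than the training itself.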