Part Number: TDA4VH-Q1

Tool/software:

Hi,

I am trying to mix multiple videos using the GStreamer compositor. When I combine Full HD videos this way, CPU utilization increases significantly. Please advise whether the TDA4AH has any hardware accelerator for GStreamer video mixing.

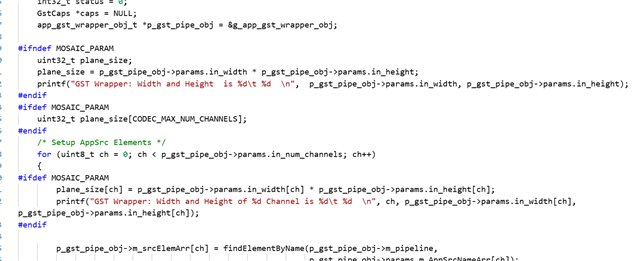

I am using the "gst-launch-1.0" command with the "compositor" element to mix multiple "appsrc" elements.
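
For reference, the pipeline has roughly the shape sketched below. This is a minimal illustration, not my exact command: "videotestsrc" stands in for the "appsrc" inputs, and the frame sizes, pad positions, buffer counts, and "fakesink" output are assumptions chosen only to show how two inputs are placed side by side in one Full HD frame.

```shell
#!/bin/sh
# Sketch of a two-input compositor pipeline (illustrative values throughout).
# videotestsrc replaces the real appsrc inputs; fakesink replaces the real output.
PIPELINE="compositor name=mix sink_0::xpos=0 sink_0::ypos=0 sink_1::xpos=960 sink_1::ypos=0 \
! videoconvert ! fakesink \
videotestsrc num-buffers=100 ! video/x-raw,width=960,height=1080 ! mix.sink_0 \
videotestsrc num-buffers=100 pattern=ball ! video/x-raw,width=960,height=1080 ! mix.sink_1"

if command -v gst-launch-1.0 >/dev/null 2>&1; then
  # Unquoted on purpose: the shell splits the string into gst-launch arguments.
  gst-launch-1.0 $PIPELINE
else
  echo "gst-launch-1.0 not found; pipeline would be: $PIPELINE"
fi
```

In this form the blending is done by the "compositor" element on the CPU, which is where the load shown in the screenshot comes from.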