Good morning.

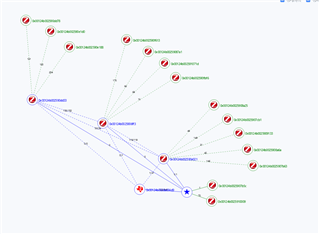

We are testing a custom application involving some custom firmware based on zr_genericapp and zed_genericapp, our last setup was represented by the following topology:

In which there are 14 custom ZEDs, 3 custom ZRs and 1 default ZR, each router is spaced out around 40 meters in a straight line from the others, while the end devices are placed in the path between the routers.

Each device is set to transmit at 20 dBm, and we are using IPEX dipole antennas in a mostly rural area.

Each custom device contains 8 endpoints of type Smart Plug.

My goal is to measure the time needed to poll the metering value of each endpoint (attribute currentSummDelivered of the seMetering cluster).

The main problems I have found are the following:

- I paired all the devices in my lab, so the end devices initially joined temporary parents that differ from the final topology. I expected them to find and switch to the best parents once deployed in the field. This happened correctly for only 7-8 of the ZEDs; the remaining ones ended up shown as disconnected in the network map. I had to walk to each disconnected device, reset it and pair it again, after which the final topology was correct. What am I missing? I avoided pairing in the field because it typically takes a variable number of retries (factory reset plus pair button press): pairing and interview almost never succeed at the first attempt there, while in the lab (on the desk) they always complete successfully.

- Once the desired topology is in place, I run my own script that polls each device endpoint (8 per device) for the 2 cluster attributes I am interested in, currentSummDelivered and OnOff. The latencies I am seeing are not very good: reading the full metering and OnOff values takes about 1m 33s when polling 8 endpoints per device, and 24s when polling 1 endpoint per device (see log).

Field test, top 5, 3 routers, 14 end devices, 1 endpoint polled for each device

| Device | Role | Time elapsed | Average per attribute |
|---|---|---|---|
| 0x00124b0025906ff3 | Router | 104.0501ms | 52.02505ms |
| 0x00124b00259087a1 | EndDevice | 111.1384ms | 55.5692ms |
| 0x00124b0025908a6a | EndDevice | 110.2387ms | 55.11935ms |
| 0x00124b0025907cb1 | EndDevice | Timed out | - |
| 0x00124b002590e021 | Router | 794.8357ms | 397.41785ms |
| 0x00124b002590dd33 | Router | Timed out | - |
| 0x00124b002590e188 | EndDevice | Timed out | - |
| 0x00124b0025910009 | EndDevice | 132.7311ms | 66.36555ms |
| 0x00124b0025907b5c | EndDevice | 435.9508ms | 217.9754ms |
| 0x00124b0025908a25 | EndDevice | 319.9962ms | 159.9981ms |
| 0x00124b002590f613 | EndDevice | 381.8213ms | 190.91065ms |
| 0x00124b0025909133 | EndDevice | 282.9713ms | 141.48565ms |
| 0x00124b0025907b63 | EndDevice | 151.1411ms | 75.57055ms |
| 0x00124b002591071d | EndDevice | 157.2716ms | 78.6358ms |
| 0x00124b002590e1d0 | EndDevice | Timed out | - |
| 0x00124b002590dd78 | EndDevice | 645.0995ms | 322.54975ms |
| 0x00124b002590fbf6 | EndDevice | 467.6726ms | 233.8363ms |

Full poll time: 23.9009177s

Field test, top 5, 3 routers, 14 end devices, 8 endpoints polled for each device

| Device | Role | Time elapsed | Average per attribute |
|---|---|---|---|
| 0x00124b0025907b63 | EndDevice | 1.4851941s | 92.824624ms |
| 0x00124b0025910009 | EndDevice | 785.6044ms | 49.100267ms |
| 0x00124b002590e021 | Router | 3.1569569s | 197.3098ms |
| 0x00124b0025908a6a | EndDevice | 1.4947743s | 93.423387ms |
| 0x00124b0025909133 | EndDevice | 3.2124645s | 200.779026ms |
| 0x00124b002590e1d0 | EndDevice | 1.8394391s | 114.964936ms |
| 0x00124b0025906ff3 | Router | 3.4791768s | 217.448546ms |
| 0x00124b002590dd33 | Router | Timed out | - |
| 0x00124b0025908a25 | EndDevice | 1.5380671s | 96.129187ms |
| 0x00124b002590dd78 | EndDevice | 2.8397282s | 177.483006ms |
| 0x00124b0025907cb1 | EndDevice | Timed out | - |
| 0x00124b002590fbf6 | EndDevice | Timed out | - |
| 0x00124b00259087a1 | EndDevice | 1.0135207s | 63.345037ms |
| 0x00124b002591071d | EndDevice | 703.0589ms | 43.941175ms |
| 0x00124b002590f613 | EndDevice | 1.1584657s | 72.4041ms |
| 0x00124b002590e188 | EndDevice | 1.8645467s | 116.534165ms |
| 0x00124b0025907b5c | EndDevice | 2.1748023s | 135.925138ms |

Full poll time: 1m33.2618987s
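As a rough reading of the two logs, the times of the devices that answered can be summed and compared with the full poll time. The sketch below does this in Python. It assumes the script polls devices one at a time (the full poll time is far larger than any single device time, which suggests so), and it attributes the remainder to the timed-out devices. `parse_go_duration` and the other names are hypothetical helpers written only for this illustration; the duration strings are copied from the logs.

```python
import re

# Seconds per unit for Go-style duration strings such as "104.0501ms" or "1m33.2618987s".
_UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 1e-3, "us": 1e-6, "ns": 1e-9}
# "ms", "us" and "ns" are listed before "m" and "s" so the two-letter units win.
_TOKEN = re.compile(r"(\d+(?:\.\d+)?)(ms|us|ns|h|m|s)")
_WHOLE = re.compile(r"(?:\d+(?:\.\d+)?(?:ms|us|ns|h|m|s))+")


def parse_go_duration(text: str) -> float:
    """Convert a duration string like '1m33.2618987s' to seconds."""
    if not _WHOLE.fullmatch(text):
        raise ValueError(f"unrecognised duration: {text!r}")
    return sum(float(value) * _UNIT_SECONDS[unit] for value, unit in _TOKEN.findall(text))


# Per-device "Time elapsed" values from the logs; None marks "Timed out".
ONE_ENDPOINT = {
    "full": "23.9009177s",
    "elapsed": ["104.0501ms", "111.1384ms", "110.2387ms", None, "794.8357ms", None, None,
                "132.7311ms", "435.9508ms", "319.9962ms", "381.8213ms", "282.9713ms",
                "151.1411ms", "157.2716ms", None, "645.0995ms", "467.6726ms"],
}
EIGHT_ENDPOINTS = {
    "full": "1m33.2618987s",
    "elapsed": ["1.4851941s", "785.6044ms", "3.1569569s", "1.4947743s", "3.2124645s",
                "1.8394391s", "3.4791768s", None, "1.5380671s", "2.8397282s", None, None,
                "1.0135207s", "703.0589ms", "1.1584657s", "1.8645467s", "2.1748023s"],
}


def breakdown(run: dict) -> dict:
    full = parse_go_duration(run["full"])
    answered = sum(parse_go_duration(t) for t in run["elapsed"] if t is not None)
    timeouts = sum(1 for t in run["elapsed"] if t is None)
    # Assumption: sequential polling, so time not spent on answered devices
    # was spent waiting on the timed-out ones.
    unexplained = full - answered
    return {
        "answered_s": answered,
        "timeouts": timeouts,
        "unexplained_s": unexplained,
        "per_timeout_s": unexplained / timeouts if timeouts else 0.0,
        "unexplained_share": unexplained / full,
    }


for name, run in (("1 endpoint", ONE_ENDPOINT), ("8 endpoints", EIGHT_ENDPOINTS)):
    b = breakdown(run)
    print(f"{name}: answered {b['answered_s']:.1f}s, {b['timeouts']} timeouts, "
          f"unexplained {b['unexplained_s']:.1f}s ({b['unexplained_share']:.0%}), "
          f"~{b['per_timeout_s']:.1f}s per timed-out device")
```

By this arithmetic, the answered devices account for about 4.1 s of the 23.9 s run and about 26.7 s of the 1m33s run. If the sequential assumption holds, most of the full poll time goes to the few devices that time out rather than to the reads themselves.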

In this test, therefore, only 17 devices had smart plug endpoints (3 custom routers + 14 custom end devices). However, we would like a single coordinator to handle around 200 of them, carefully split between routers and end devices to avoid needing too many router-to-router hops (which, in our experiments, turned out to be limited to 10).

In other words, we would like to take the topology in the picture, increase the number of devices to 30, and use it as one branch of a star centered on the coordinator, with, for example, 6 such branches.
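A back-of-envelope extrapolation to the target size can reuse the measured per-attribute latency. The sketch below assumes strictly sequential polling, 8 endpoints with 2 attributes each, and that the average per-attribute time of the 8-endpoint run (about 119 ms: 26.7 s over 14 answered devices × 16 attributes) stays the same at 180-200 devices. That last assumption is optimistic, since more devices and longer branches add hops and traffic. `estimate_full_poll_s` is a hypothetical helper, not part of any stack.

```python
# Average per-attribute time of the 8-endpoint run: 26.7457997 s spent on the
# 14 devices that answered, each read for 8 endpoints x 2 attributes.
MEASURED_PER_ATTR_S = 26.7457997 / (14 * 8 * 2)


def estimate_full_poll_s(devices: int,
                         endpoints_per_device: int = 8,
                         attrs_per_endpoint: int = 2,
                         per_attr_s: float = MEASURED_PER_ATTR_S,
                         timed_out_devices: int = 0,
                         per_timeout_s: float = 0.0) -> float:
    """Linear, sequential estimate of one full poll cycle, in seconds."""
    if not 0 <= timed_out_devices <= devices:
        raise ValueError("timed_out_devices must be between 0 and devices")
    answered = devices - timed_out_devices
    reads = answered * endpoints_per_device * attrs_per_endpoint
    # Answered devices cost their reads; timed-out devices cost their timeout.
    return reads * per_attr_s + timed_out_devices * per_timeout_s


for n in (17, 180, 200):  # 180 = 6 branches x 30 devices
    seconds = estimate_full_poll_s(n)
    print(f"{n} devices, no timeouts: {seconds:.0f} s (~{seconds / 60:.1f} min)")
```

With no timeouts this gives roughly 5.7 minutes for 180 devices and 6.4 minutes for 200. Each timed-out device adds its own timeout cost on top; in the 8-endpoint run, under the same sequential assumption, that was about 22 s per timed-out device.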

How can this scale? Can we hope to poll the full metering and OnOff attributes of every endpoint of every device in a time on the order of ten minutes? As for actuation, can we expect to send an "On" or "Off" command reliably, with both the previous and the new state confirmed, in under one second?
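For the actuation question, a confirmed switch needs at least three round trips: read OnOff (previous state), send the On/Off command, read OnOff again (new state). The sketch below assumes each of these costs about as much as one attribute read in the logs. That assumption is not measured here, since the command round trip was not logged. `actuation_time_s` and `OBSERVED` are hypothetical names used only for this illustration.

```python
ONE_SECOND_BUDGET_S = 1.0


def actuation_time_s(per_transaction_s: float, transactions: int = 3) -> float:
    """Read-before + On/Off command + read-after, assuming each transaction
    costs about one measured attribute read."""
    return per_transaction_s * transactions


# "Average per attribute" values taken from the logs above, in seconds.
OBSERVED = {
    "0x00124b002591071d (fastest, 8-endpoint run)": 0.043941175,
    "0x00124b0025906ff3 (slowest, 8-endpoint run)": 0.217448546,
    "0x00124b002590e021 (slowest, 1-endpoint run)": 0.39741785,
}

for device, per_attr in OBSERVED.items():
    total = actuation_time_s(per_attr)
    verdict = "within" if total < ONE_SECOND_BUDGET_S else "over"
    print(f"{device}: {total * 1000:.0f} ms, {verdict} the 1 s budget")
```

Under these assumptions, most answering devices would fit in one second. The slowest device of the 1-endpoint run would need about 1.2 s, though, and a single timeout or retry would exceed the budget by a wide margin.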

Is this kind of application really suitable for the Zigbee protocol? Or should we evaluate WiFi modules instead, replacing the coordinator with an access point, the Zigbee routers with WiFi repeaters and the end devices with client nodes?

Any advice would be much appreciated.

Thank you again for your prompt and knowledgeable support.

Roberto