Other Parts Discussed in Thread: ADS5500, ADS42JB69, ADS42LB69

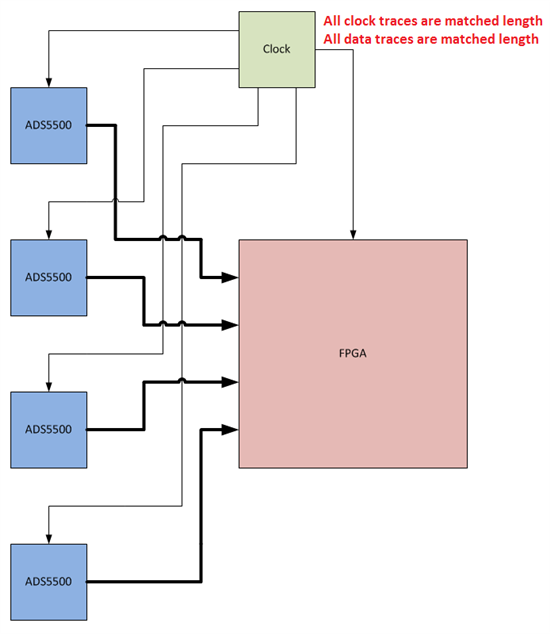

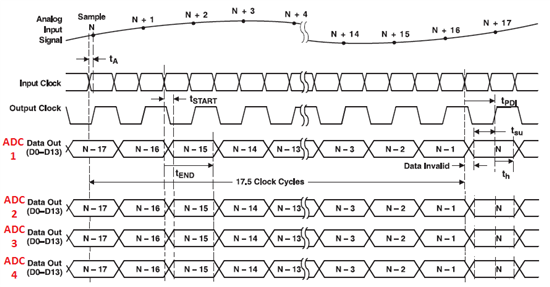

If I have two ADS5500 digitizers with the DLL enabled, both running off a common clock and measuring the same input signal, will the relative phase of the two digital outputs have a fixed relationship from one power-up to the next? The attached sketch shows the setup.