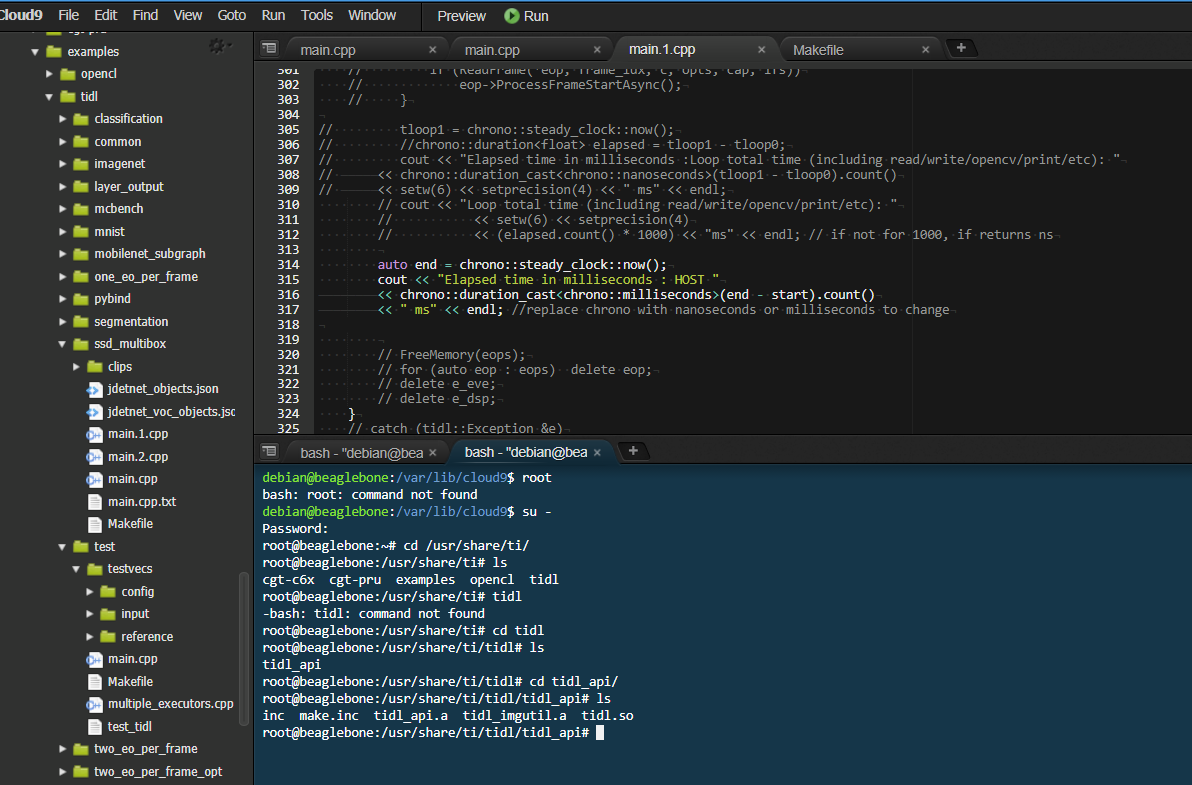

I am working with a BeagleBone AI, which comes with the Cloud9 IDE. The software stack is TIDL with OpenCL and C++ wrappers.

My question is:

For neural network models implemented with TIDL, how do I measure the host and co-processor execution times separately? What mechanism should I use?

In general OpenCL code such as vecadd (vector addition), I was able to use profiling events to measure the runtime, but I have no idea how to do the same with the imagenet or ssd_multibox examples. Please help.